WHERE HAS INNOVATION GONE? By Michael S. Malone


I had an odd moment earlier this week. For many years now, I’ve been involved in the creation of a historical museum in my hometown, Sunnyvale, California. In fact, you can say that I’ve been part of this project since I was 12 years old, when, in researching a Boy Scout merit badge, I happened to leave some library books lying around . . . and my father read them.

That set my old man on a quarter-century quest to build Sunnyvale a new museum, which continued after his death – with my mother as one of the lead benefactors – until last fall. That’s when, to great ceremony, the new Sunnyvale Historical Museum – built as a replica of the city’s (and essentially, California’s) first American home – finally opened. It was quite a day, not least because of the unforgettable sight of my youngest son escorting his 87-year-old grandmother into the exhibit room named after her and his grandfather.

Like most community museums, the Sunnyvale museum struggled to the financial finish line. As a result, the Museum task force had to make some tough decisions about exhibits. It chose, wisely, to fill the first floor with exhibits from the early Indian/Mission/Orchards era of the city (and Santa Clara Valley) and leave the second floor – to be dedicated to modern Sunnyvale as the heart of Silicon Valley – empty until enough money could be found.

Helping to create the first exhibit on the second floor was what brought me to the Museum this week. The goal is to create a massive timeline of the history of the electronics revolution that will cover one thirty-foot-long wall with key milestones in everything from semiconductor chips to avionics to software – noting the many inventions (such as the microprocessor, the video game and the Apple computer) that had roots in Sunnyvale.

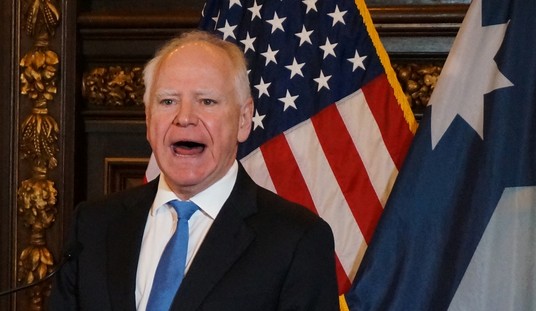

The two Silicon Valley ‘experts’ on the task force are myself and Regis McKenna, the Valley’s most famous marketing and PR guru (think Intel and Apple). Regis, who is now retired, is a generation older than me and someone I’ve always considered – I’ve known him as a neighbor since I was a teenager – to be a fount of wisdom and advice, especially about the sociology of high tech.

As you can imagine, from the guy who introduced both the Intel 8080 and the Macintosh, Regis has a lot of experiences to share.

It was my job to design the timeline and populate it with the hundred or so major milestones of the electronics/digital revolution, from the programmable Jacquard looms of the early 19th century to the iPhone. And as I laid out the grid and began to fill it with items, my biggest concern was that the ancient end of the timeline would be so empty and the modern end so stuffed with events that the layout would look distorted and visually unbalanced.

And indeed, one part of the chart did look stuffed with milestone inventions – not in the present, but rather in the era from about 1967 to 1977: the microprocessor, the Internet, calculators, video games, the personal computer, the cellular phone, etc. The fact that history seemed to be building up to that era – especially technology’s Annus Mirabilis of 1969 – came as no surprise. What did surprise was how, in the years after that, right up to the present, that efflorescence of innovation seemed to fade.

I remarked on this to Regis, reminding him that fifteen years ago he warned me that the U.S. wasn’t making sufficient investment anymore into basic scientific research, that we were ‘eating our seed corn’, and living off the hard work done in solid state physics, fiber optics and networks decades before. Perhaps, I told him, we are looking at evidence that his worst fears have come true.

Regis didn’t entirely agree, suggesting that some of today’s tech innovation is simply less visible, not hardware but software code and clever new algorithms.

Perhaps, but I’m not convinced. After all, it seems to me that even in software, most of the really important innovation also happened during those ten years beginning in the mid-Sixties, or earlier, during the mainframe computer era. What software innovation we’re seeing today is largely in the form of applications . . . and one would be hard-pressed to name, say, an iPhone app that will leave its mark on history the way the vacuum tube or oscilloscope did.

What didn’t need to be said between us – Regis is, after all, a veteran angel investor in new tech start-ups – is that, between growing government regulation and the current economic crisis, there simply isn’t money out there to finance the next generation of hot new entrepreneurial start-ups and high-risk corporate ‘skunkworks’ projects that produce the really great inventions and products. VCs these days are running scared, their business model ruined in a world without IPOs, putting their money in only the safest, low-risk/low-return deals. And that isn’t how you fund the next tech revolution.

By coincidence, a couple days later, I had lunch with another Silicon Valley pioneer and legend, Federico Faggin, co-inventor of the microprocessor and the man behind the computer touch pad on which my thumb now rests.

I told him about my surprising findings with the timeline. Is it possible, I asked, that I was missing something? Yes, said Faggin, you need to keep in mind that most of the sectors of what we consider to be electronics, such as semiconductors, have already passed through their phases of great innovation and are now mature industries dedicated to perfecting their inventions, not replacing them. Where you should look now, he suggested, is in places like nanotechnology, biotech and medical technology that are still on the steep part of the innovation curve.

Once again, perhaps. But even if that is true, I don’t find it particularly consoling about our future. For one thing, are those industries of sufficient size and importance to make up for the stagnation of all of the rest? Can they keep our economy healthy and vital?

Now, add to this the fact that these new industries are hardly immune to the dry-up of capital taking place everywhere else – not to mention the loss of the crucial liquidity event once made possible by ‘going public’ – and it is hard to see how, no matter how creative their scientists and entrepreneurs, those businesses are going to fund the great innovations that are supposed to lie in their futures. Even more disturbing is the possibility that this economic downturn will be prolonged through mismanagement by Washington, while other, more rapidly recovering, nations such as China (which applied its stimulus package quickly and decisively) will use the opportunity to race ahead.

But the coup de grace to American innovation may be health care reform. As a growing number of pundits have begun to point out: Whatever the merits of the Obama Health Care plan, it is hard to make the case that it will do anything but suppress innovation in medicine, pharma, and biotech.

What no one seems to have noticed yet – and which just struck me this week – is that this loss of innovation will occur in precisely those industries in the U.S. economy where we are depending upon innovation to occur. It is just those fields (nanotech aside) that we are likely to depend upon for our economic growth, job creation, and competitiveness.

That’s a very frightening scenario, suggesting a kind of living-death economic recession from which we never fully emerge. Shouldn’t we at least discuss this unforeseen danger before we ram through a trillion-dollar bill that nobody seems to have read?
