The final frontier of social control is, as the World Economic Forum technocrats have explained ad nauseam, inside of the human body.

“Imagine the applications of that, the compliance,” Pfizer CEO Albert Bourla fantasized at the World Economic Forum a few years back, describing a microchip embedded in medication that reports to Pfizer when the patient has taken it.

Like autonomy over which medications they take, the slaves' own private, internal thoughts are too much of a threat to permit in the coming dystopia.

Thought control has been a prominent feature of totalitarian rule since the dawn of history, but despots have been forced to rely on lo-fi techniques like propaganda and book burning.

Technology is opening up whole new avenues for them.

In George Orwell’s 1984, the technocrats installed telescreens everywhere, even in the Party members’ homes, to monitor their facial expressions day and night:

The telescreen received and transmitted simultaneously. Any sound that Winston made, above the level of a very low whisper, would be picked up by it; moreover, so long as he remained within the field of vision which the metal plaque commanded, he could be seen as well as heard. There was of course no way of knowing whether you were being watched at any given moment.

But even this technique relies on an analysis of facial expressions and verbalizations to determine whether the slave is thinking subversive thoughts. A dissident can fool it, as Winston did throughout his life, by carefully managing his words and expressions.

In reality, as Brave New World author Aldous Huxley explained to Orwell in a letter following the publication of 1984, the final revolution of thought control will turn out to be much more technical and precise.

Via University of Texas:

A new artificial intelligence system called a semantic decoder can translate a person’s brain activity — while listening to a story or silently imagining telling a story — into a continuous stream of text. The system developed by researchers at The University of Texas at Austin might help people who are mentally conscious yet unable to physically speak, such as those debilitated by strokes, to communicate intelligibly again.

The study, published in the journal Nature Neuroscience, was led by Jerry Tang, a doctoral student in computer science, and Alex Huth, an assistant professor of neuroscience and computer science at UT Austin. The work relies in part on a transformer model, similar to the ones that power OpenAI’s ChatGPT and Google’s Bard.

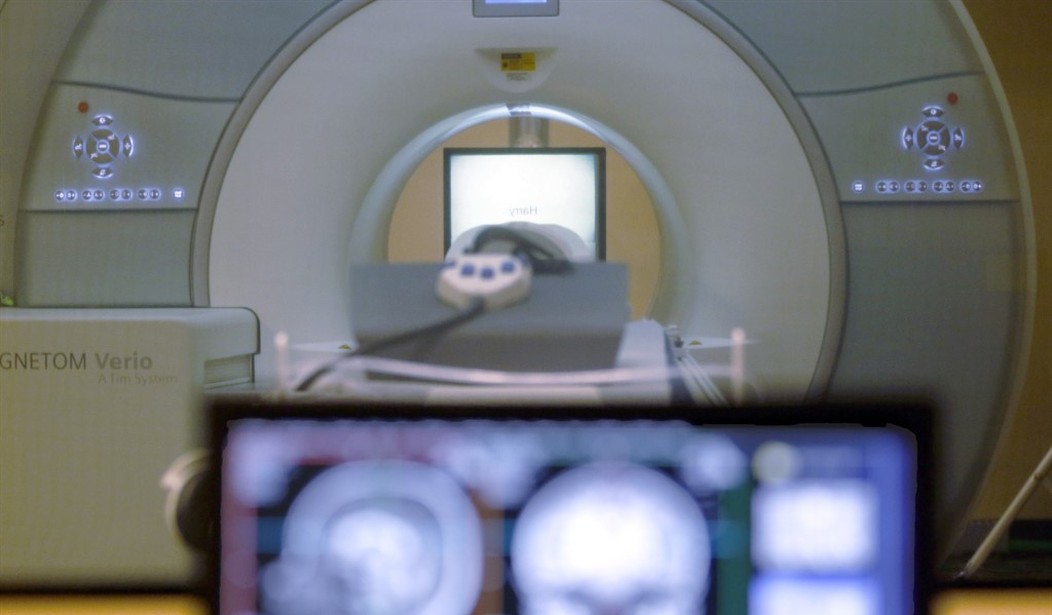

The researchers recorded brain activity with a functional MRI (fMRI) scanner and decoded it into text, with no electrodes or invasive technique of any sort required.
