A couple of AI chatbots got tangled in a web (no pun intended) of misinformation: a loop of self-referential citations, based on nothing, circulated widely across the internet in a digital echo chamber.

It’s turtles all the way down.

Via The Verge:

Right now, if you ask Microsoft’s Bing chatbot if Google’s Bard chatbot has been shut down, it says yes, citing as evidence a news article that discusses a tweet in which a user asked Bard when it would be shut down and Bard said it already had, itself citing a comment from Hacker News in which someone joked about this happening…

What we have here is an early sign we’re stumbling into a massive game of AI misinformation telephone…

Given the inability of AI language models to reliably sort fact from fiction, their launch online threatens to unleash a rotten trail of misinformation and mistrust across the web, a miasma that is impossible to map completely or debunk authoritatively. All because Microsoft, Google, and OpenAI have decided that market share is more important than safety.

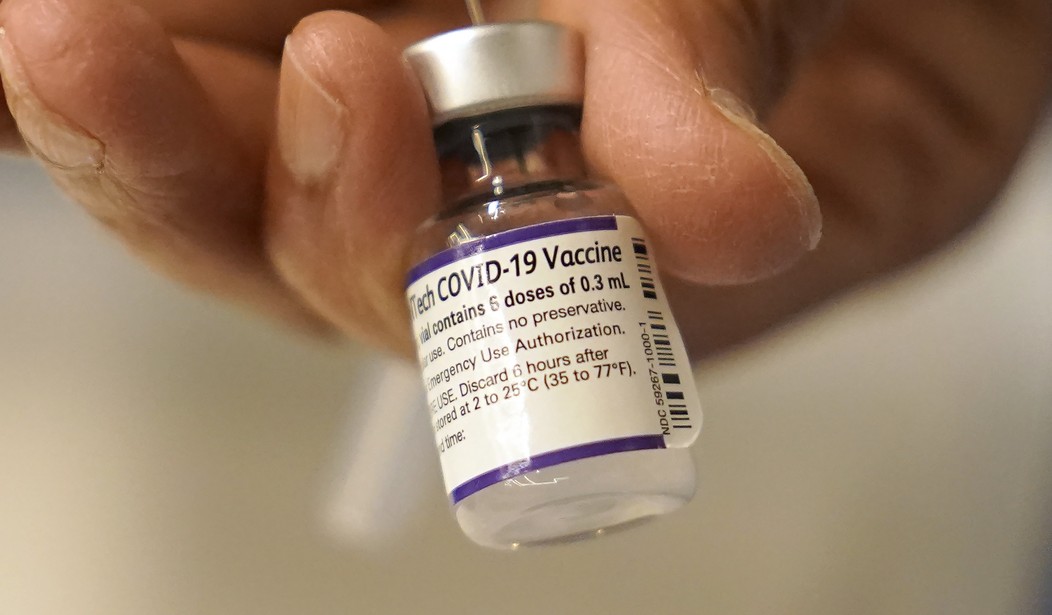

Here we have it: the old “safety”/“security” excuse to police information. You’ll recognize this as the justification for COVID-19 censorship: the “anti-vaxxers” were allegedly harming something called “public safety” by stating factual information about the inefficacy and, ironically, the unsafety of the mRNA jabs deceptively marketed as “vaccines,” a position vindicated in spades by what actual, unbiased scientific research is still allowed to occur in the West.

This is, beyond the hypocrisy, the fundamental problem: AI is a serious and possibly even existential threat to humanity. Pumping out misinformation is the least of the threats it poses. It needs to be reined in for judicious use. But who is going to enforce that? The companies won’t; they’re obsessed with maximum capitalization via innovation with no brakes. The government has already proven it can’t be trusted. Where does one turn?

The Chinese Communist Party solution to the AI misinformation problem is more AI.

Via AI News:

China’s Cyberspace Administration (CAC) has launched a campaign to combat fake news generated by AI.

The crackdown is focused on news providers, including short video platforms and popular search lists.

The CAC specifically highlighted manipulative practices such as the use of AI virtual anchors, forged studio scenes, fake news accounts mimicking legitimate ones, and the manipulation of news to create misleading storylines. These practices are employed to generate what’s often known as clickbait.

—————————————-

This might seem an unrelated tangent, but if you follow the Brandon entity’s administrative rhetoric, you will notice something novel about it. The diversity hire Karine Jean-Pierre, the primary presidential mouthpiece, regularly punts her own boss’s nominal authority over the administrative state to the various departments and agencies under his purview. The Brandon entity, in other words, goes out of its way to give away the raw political power the Constitution enumerates for it as the elected head of the executive branch.

I have previously written about the phenomenon in greater detail elsewhere.

“Psaki: We are always going to be guided by our North Star — and that is the CDC and our health and medical experts.

…

Reporter: The Delta variant is already dominant in the U.S., so how does keeping people from foreign countries out, like, protect people in the U.S.?

…

Psaki: I’m not a doctor or a medical expert. I think that that would best be posed to a member of the CDC… But I think their decision was made based on the fact that the Delta variant is more transmissible.”

–White House Press Briefing, July 26, 2021

The ultimate aim of this is to slowly transition away from representative government to total technocracy, with decision-making authority outsourced completely to AI. In the end, humans will be fully extricated from the political process.