1. Is obesity a disease or a moral failing?

And what are we to make of the fact that an affliction of the rich is now predominantly a problem of the poor?

Obesity – being very fat – is a condition that is at the much disputed border between medicine and moral weakness. No one doubts that being very fat is bad for you, that is to say has deleterious consequences as far as pathology and life expectancy are concerned, to say nothing of aesthetics, but is it a disease in itself, and are doctors their patients’ keepers? To this no final answer can be returned, for it lies not in the realm of physic but of metaphysic. One answers as much according to one’s philosophical predilections and presuppositions as to empirical evidence.

Many people take obesity as a mass phenomenon (if I may be allowed a little pun of doubtful taste), not just among the American but among the world population, as evidence that people are not really responsible as individuals for what they put into their mouths, chew, and swallow, but rather victims of something beyond their control. If they are not so responsible, of course, it is rather difficult to see what they are or even might be responsible for. But the impersonal-forces point of view is well expressed in an editorial in a recent edition of the New England Journal of Medicine by a public health doctor and an expert in “communication,” by which I suppose is meant advertising and propaganda.

The concern [about the increasing obesity of the population] prompted the recent Institute of Medicine (IOM) report, “Accelerating Progress in Obesity Prevention: Solving the Weight of the Nation.” The groundbreaking report and accompanying HBO documentary, “The Weight of the Nation,” present a forceful case that the obesity epidemic has been driven by structural changes in our environment, rather than embrace the reductionist view that the cause is poor decision making by individuals.

There follow in the editorial, as perhaps one might expect, a few paragraphs of managerialese, whose only moral principle is that it is vital not to stigmatise the fat because then they might feel bad about being fat. It is a bad thing, ex hypothesi, to be fat, but apparently an even worse one to feel bad about being fat – a feeling that might, I suppose, lead fat people to eat more Krispy Kreme doughnuts. Once a certain point is reached, then, people are not fat because they eat, but eat because they are fat. Nietzsche would have found this reversal of causative relationship interesting.

As everyone knows, there has been another historical reversal: obesity was once the problem of the rich, but now, epidemiologically speaking, it is a problem of the poor. Curiously enough, in the light of a general denial of personal responsibility for conduct, there have of late been increasing attempts by doctors and public health authorities to pay, or to bribe, the poor into behaving healthily, for example by giving up smoking. There have even been experiments to get drug addicts to give up by means of payment, either in cash or in kind. A certain success has been claimed for these methods; and thus, as the British Medical Journal put it in the same week as the NEJM editorial, “Using cash incentives to encourage healthy behaviour among poor communities is being hailed as a new silver bullet in global health.”

But cash payments can work only if people are capable of making choices. How then do we resolve the contradiction? I hesitate to quote a doctor of philosophy rather than of medicine, but here is what Karl Marx had to say:

Men make their own history, but not just as they please; they do not make it under circumstances chosen by themselves, but under circumstances directly encountered, given and transmitted from the past.

Only public health doctors and experts in communication are free of such constraints, and they make history for other people.

2. Should an alcoholic be allowed a second liver transplant?

Considering the question of how to allocate a scarce resource: human organs.

In private health-care systems, rationing of health care is by price; in public health care it is by waiting lists and administrative fiat. Both have their defenders, usually ferocious and bitterly opposed, but the fact remains that there are some treatments that have to be rationed however much money is available for health care: as when, for example, there are more people needing organ transplants than there are organs to be transplanted. Few people would be entirely happy to allocate organs merely to the highest bidder.

A recent article in the New England Journal of Medicine tackles the problem of allocation of lung transplants. A system was in place in the United States that excluded children under 12 years of age from receiving adult lungs as transplants, an exclusion that parents of a child with cystic fibrosis challenged in the courts. The problem for children under the age of 12 requiring lung transplants is that there are very few child donors, so in effect the system discriminated against them.

The reason for the exclusion was that most children for whom lung transplants are considered have cystic fibrosis, a condition for which the results of such transplants are equivocal given the constantly improving medical treatment of the disease. Moreover, children are especially liable to complications from the procedure, though these can be partially overcome by using not whole adult lungs for transplant but only resected lobes of them.

The American system of allocation of lungs for transplant into adults takes into account various factors, such as years of potential benefit from transplant, the imminence of death without transplant, the statistical chance of success of transplant, and so forth. Ability to pay does not come into it; in other words it is a socialized system, but there is a mechanism of appeal for those relegated to low priority which the more educated and wealthier are better able to take advantage of. No explicit judgment is made about the relative social or economic worth of the individual, however, for that way madness, or at least extreme nastiness, lies. And the authors of the article think that, on the whole, the system works well, for it seems to stand to reason that those who would benefit most should go to the top of the waiting list.

I once had a small transplant dilemma of my own…

A newspaper in England asked me to write an article, for what for me was a considerable sum of money, to opine that a certain very famous soccer player, who had turned severely to drink after his retirement, should not be given a second liver transplant, the first having failed because of his continued drinking. The player in question was not admirable, but he did say one memorable thing. When he had been impoverished by his habits, an interviewer asked him where all his money had gone. “Wine, women and song,” he replied. “The rest I wasted.”

I told the newspaper that, as a practising doctor, I could not possibly write an article saying that a named person should be left to die without potentially life-saving treatment.

“Do you know a doctor who would write it?” the editor asked.

“I hope not,” I replied.

I was not being quite honest. In my heart I did not think that the man should have a second transplant (in the event, he did get it and, all too predictably, it went the way of the first). It was not only that I did not think he would give up drinking, a matter of statistical likelihood; but I also thought that he did not deserve his second chance, especially if it meant that someone else was deprived of a first. But doctors treat diseases, not the deserts of their patients.

3. Are psychiatric disorders the same as physical diseases?

Even when their causes are organic, our minds make us who we are.

A great deal of effort has gone into persuading the general population that psychiatric conditions are just like any others: colds, arthritis, and so forth. I have never found this convincing; psychiatric disorders, including organic ones, are precisely what it is that makes us most ourselves. No one boasts that his symptoms are of psychological origin, though any of us may suffer such symptoms.

In 1982, the neurologist and writer Oliver Sacks wrote a book, A Leg to Stand On, in which he described an accident while walking in Norway. He injured the tendon of one of his thigh muscles, which was repaired by operation; but afterwards he found that he could not walk because he could not move his leg. He had “forgotten” how to do so. In addition, he no longer experienced his leg as part of himself, but as a completely alien object.

In his book, he rejected the hypothesis that his paralysis was hysterical, that is to say caused by unconscious mental conflict. Rather he preferred to believe that his peripheral nerve and muscle injuries had somehow affected his brain, and therefore his inability to move his leg was not psychological but physical.

In the latest edition of the Journal of Neurology, Neurosurgery and Psychiatry, three neuroscientists, including a neurologist and a psychiatrist, reinterpret Sacks’ symptoms and say that they were indeed psychogenic, or what used to be called hysterical. They say that his pattern of symptoms was incompatible with a purely neurological explanation, indeed that they were typical of hysterical paralysis, though they emphasize that this does not mean in the least that they were “fake” or “imaginary.” As a 19th-century doctor put it of female patients with hysterical paralyses (most patients with such paralyses were female): “She says, as all such patients do, ‘I cannot’; it looks like ‘I will not’; but it is ‘I cannot will.’”

Sacks was given the right of reply to the authors and he sticks by his original contention that the paralysis from which he suffered was not psychological in origin. One has the impression that he does not merely disagree with this idea, but finds it uncomfortable and does not like it. Even if hysterical paralysis existed, it would be confined to others.

A third paper in the same journal, by Anthony David, the Professor of Cognitive Neuropsychiatry at the Maudsley Hospital, has an interpretation that might not have pleased Dr. Sacks:

Is it not one of the mechanisms whereby a minor injury can lead to major disability that it sows the seeds of what it might be like to be disabled and hence to be looked after, pitied, lionised? None of us is immune to this but like Sacks perhaps, most having glimpsed what life might be like on the “other side” returns with haste to the land of the healthy.

We have two attitudes to psychological vulnerability: either we assume it to an extraordinary degree to demonstrate our superior sensitivity, or we deny it altogether, believing ourselves to be completely invulnerable.

I tend to the latter, but I once learned differently when I was in a distant land famed for its inefficient bureaucracy. There was a problem with my ticket home and it took days to sort out. During those days I suffered from severe and almost incapacitating backache that I did not connect to the problem with my ticket. The backache disappeared, however, within minutes of the problem having been sorted out. As Dr. Chasuble put it in The Importance of Being Earnest:

Charity, my dear Miss Prism, charity! None of us are perfect. I myself am peculiarly susceptible to draughts.

4. Do doctors turn their patients into drug addicts?

Prescription is quick and lucrative, while encouraging the patient to forgo his drugs is difficult, time-consuming and ill-paid.

When I was a young doctor, which is now a long time ago, patients who were close to death were often denied drugs like morphine for fear of turning them into addicts during their last weeks of earthly existence. This was both absurd and cruel; but nowadays we have gone to the opposite extreme. We dish out addictive painkillers as if we were doling out candy at a children’s party, with the result that there are now hundreds of thousands if not millions of iatrogenic — that is to say, medically created — addicts.

An editorial in the New England Journal of Medicine asks why this change happened, and provides at least two possible answers.

The first is that there has been a sea change in medical and social sensibility. Nowadays, doctors feel constrained to take patients at their word: a patient is in pain if he says he is because he is supposedly the best authority on his own state of mind and the sensations that he feels. This certainly meant that at the hospital where I worked you could see patients, allegedly with severe and incapacitating back pain, skipping up the stairs and returning with their prescriptions for the strongest analgesics to treat their supposed pain. In the new dispensation, doctors were professionally bound to believe what the patients said, not what they observed them doing.

The automatic credence placed in what a patient says — or credulity, if you prefer — is deemed inherently more sympathetic than a certain critical or questioning attitude towards it. And since it is now possible, indeed normal, for patients to report on doctors adversely and very publicly via the internet and other electronic media, doctors find themselves in a situation in which they must do what patients want or have their reputations publicly ruined. When in doubt, then, prescribe.

The second reason proposed in the editorial for the liberal prescription of addictive analgesics, even to those patients whom the doctor knows or suspects to be abusing them, is economic. Prescription is quick and lucrative, while encouraging the patient to forgo his drugs is difficult, time-consuming, and ill-paid. The doctor cannot afford, at least if he wants to preserve his income, to spend a lot of time with any one patient, and addicts denied their drugs can easily use up hours of the doctor’s time.

Practically all doctors (apart from pathologists) must now take courses in pain management, but not in the addiction to which the proliferation of these courses seems to have led. The author of the editorial, who is from Stanford, believes that not until doctors accept addiction as a disease, chronic and relapsing like, say, asthma, and are duly rewarded for treating it, will the problem be solved. The trouble is that addiction is not a disease like any other, any more than is burglary or driving too fast. Medical consequences do not make a disease.

Nevertheless, the editorial draws attention to the pressures on doctors to prescribe what they know in their hearts they ought not to prescribe. It omits, however, three factors: the unprecedented commercial promotion of strong painkillers by drug companies, the doctor’s physical fear of his patients (assaults by the disgruntled are not uncommon), and a strong dislike of scenes in his office. I remember very well that when I refused to prescribe either strong painkillers or other addictive drugs such as benzodiazepines (for example, Valium), some of the patients would start to shout that I was not a doctor but a murderer. This was idiotic, of course, but such scenes are wearing on the nerves. Many doctors just give in prophylactically, as it were.

5. As life expectancy increases will the elderly become too much of a burden on society?

Eighty really is the new 70…

At dinner the other night, a cardiologist spoke of the economic burden on modern society of the elderly. This, he said, could only increase as life expectancy improved.

I was not sure that he was right, and not merely because I am now fast approaching old age and do not like to consider myself (yet) a burden on or to society. A very large percentage of a person’s lifetime medical costs arise in the last six years of his life; and, after all, a person only dies once. Besides, and more importantly, it is clear that active old age is much more common than it once was. Eighty really is the new seventy, seventy the new sixty, and so forth. It is far from clear that the number of years of disabled or dependent life is increasing just because life expectancy is increasing.

There used to be a similar pessimism about cardiopulmonary resuscitation. What was the point of trying to restart the heart of someone whose heart had stopped if a) the chances of success were not very great, b) they were likely soon to have another cardiac arrest and so their long-term survival rate was low and c) even when restarted, the person whose heart it was would live burdened with neurological deficits caused by a period of hypoxia (low oxygen)?

A paper in the New England Journal of Medicine examines the question of whether rates of survival of cardiopulmonary resuscitation have improved over the last years and, if so, whether the patients who are resuscitated have a better neurological outcome.

The authors entered 84,625 episodes of cardiac arrest (either complete asystole or ventricular tachycardia) among in-patients in 374 hospitals in their study, which covered the years 2000 to 2009. They found that, between those two years, the rate of survival to discharge from the hospital for patients who had been resuscitated increased from 13.7 to 22.3 percent. This improvement was very unlikely to have been by chance alone. Moreover, the percentage of those who left the hospital with clinically significant neurological impairment as a result of their cardiac arrest decreased from 32.9 percent in 2000 to 28.1 percent in 2009. Extrapolating to figures in the United States as a whole, where there are about 200,000 cardiac arrests per year among hospital in-patients, the authors estimate that 17,200 extra patients survived to discharge in 2009 compared to 2000, and 13,000 extra with no significant neurological disability – if, that is, the 374 hospitals were representative of U.S. hospitals as a whole, which they may not have been.

Of course, it is usually possible to extract pessimistic data from the most optimistic data. The study could have emphasized that, thanks to improvement in cardiopulmonary resuscitation, 4,200 extra patients with significant neurological disability were being discharged from hospitals annually, a burden, as the dinner guest would have put it, on society.

In addition, only 22.3 percent of patients given CPR survived to discharge while 54.1 percent responded initially to it. This means that in 2009, 31.8 percent of patients resuscitated died in the hospital after initial success; in 2000, the figure had been only 29.0 percent. Presumably patients who responded initially to resuscitation but subsequently died used up a lot of expensive resources in the meantime.
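For readers who like to check such figures, the arithmetic behind the last few paragraphs can be reproduced in a few lines. This is only a sketch using the numbers quoted above; the variable and function names are illustrative and do not come from the study.

```python
# Figures as quoted in the text from the NEJM study (2000 versus 2009).
ARRESTS_PER_YEAR = 200_000          # approximate annual in-hospital cardiac arrests, U.S.
SURVIVAL_2000 = 0.137               # survival to discharge, 2000
SURVIVAL_2009 = 0.223               # survival to discharge, 2009
INITIAL_RESPONSE_2009 = 0.541       # responded initially to CPR, 2009
EXTRA_WITHOUT_DISABILITY = 13_000   # the authors' estimate, as quoted


def extra_survivors(arrests, rate_before, rate_after):
    """Extra patients surviving to discharge per year at the later rate."""
    return round(arrests * (rate_after - rate_before))


extra_total = extra_survivors(ARRESTS_PER_YEAR, SURVIVAL_2000, SURVIVAL_2009)

# The pessimist's figure: extra survivors who leave with significant disability.
extra_with_disability = extra_total - EXTRA_WITHOUT_DISABILITY

# Percentage who responded initially in 2009 but died before discharge.
died_after_initial_success_2009 = round(
    (INITIAL_RESPONSE_2009 - SURVIVAL_2009) * 100, 1
)

print(extra_total, extra_with_disability, died_after_initial_success_2009)
```

The same three inputs thus yield both the optimistic and the pessimistic reading; which one is emphasized is a matter of choice, not of arithmetic.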

The authors are cautiously optimistic. They admit that the improvement might have been due to something other than better technique of CPR: a change in the nature of the patients having it, for example. Nevertheless, these results are more encouraging than those of a previous study, which showed no improvement in survival of CPR patients in the Medicare system between 1992 and 2005.

6. Is marijuana a medicine?

Or is a new generation of addicts emerging?

No doubt I have forgotten much pharmacology since I was a student, but one diagram in my textbook has stuck in my mind ever since. It illustrated the natural history, as it were, of the way in which new drugs are received by doctors and the general public. First they are regarded as a panacea; then they are regarded as deadly poison; finally they are regarded as useful in some cases.

It is not easy to say which of these stages the medical use of cannabis and cannabis-derivatives has now reached. The uncertainty was illustrated by the on-line response from readers to an article in the latest New England Journal of Medicine about this usage. Some said that cannabis, or any drug derived from it, was a panacea, others (fewer) that it was deadly poison, and yet others that it was of value in some cases.

The author started his article with what doctors call a clinical vignette, a fictionalized but nonetheless realistic case. A 68-year-old woman with secondaries from her cancer of the breast suffers from nausea due to her chemotherapy and bone pain from the secondaries that is unrelieved by any conventional medication. She asks the doctor whether it is worth trying marijuana since she lives in a state that permits consumption for medical purposes and her family could grow it for her. What should the doctor reply?

The scientific evidence about the medical benefits of cannabis is suggestive but not conclusive, in large part because governments have placed legal obstacles in the way of proper research, but also because the smoke of marijuana contains so many compounds that need to be tested individually. But it seems that cannabis can relieve nausea (one of the most unpleasant of all symptoms when it is persistent) and some kinds of pain. Its side effects in this context are unlikely to be serious or severe. To worry about the addictive potential of a drug, for example, when the patient is unlikely to survive very long is clearly absurd, though one doctor did raise the question. I remember all too clearly the days when patients who were dying were denied pain relief by heroin because it was supposedly so addictive.

As soon as the subject of cannabis comes up, passions are aroused that seem to make it impossible for people, even doctors, to stick to the point. One correspondent pointed out that a half of American schoolchildren had tried cannabis, but this was irrelevant even to the irrelevant point he was trying to make. No one, after all, suggests that there should be no speed limits because almost every driver breaks the law within thirty seconds of starting out. There may be arguments for the decriminalization of cannabis but this is not one of them.

The author of the article comes to the conservative conclusion that doctors should prescribe cannabis medicinally only when all other treatment options (including the much more dangerous oxycodone) have failed. This might seem contradictory. If cannabinoids should prove as effective in some situations as opioids they would be drugs of first rather than last resort.

But it is unlikely in any case that all doctors will remain conservative for long or will prescribe cannabinoids only as a last resort. In Britain in the 1950s doctors were permitted to prescribe heroin for heroin addicts. A very small number of them began, either for payment or because they believed that the “recreational” use of heroin was harmless or even a human right, to prescribe very liberally. The number of addicts increased very quickly, but whether it would have done so anyway is now impossible to say.

7. Is nutrition really that important for good health?

The Mediterranean Diet put to the test.

Having recently returned from Madrid, I confess that I saw little evidence of the Mediterranean diet being consumed there (apart, that is, from the red wine): though, of course, Madrid is in the middle of the peninsula, far from the Mediterranean. Perhaps things are different on the coast. Nevertheless, at over 80 years, Spain has one of the highest life expectancies in the world.

Is this because of the much-vaunted Mediterranean diet? Spanish research recently reported in the New England Journal of Medicine provides some – but not very much – support for the healthiness of that diet.

The researchers divided 7000 people aged between 55 and 80 at risk of heart attack or stroke because they smoked or had type 2 diabetes into three dietary groups. One group (the control) was given dietary advice concerning what they should eat; the other two groups were cajoled by intensive training sessions into eating a Mediterranean diet, supplemented respectively by extra olive oil or nuts, supplied to them free of charge.

They were then followed up for nearly five years, to find which group suffered from the most (or the least) heart attacks and strokes. The authors, of whom there were 18, concluded:

Among persons at high cardiovascular risk, a Mediterranean diet supplemented with extra-virgin olive oil or nuts reduced the incidence of major cardiovascular events.

The authors found that the diets reduced the risk of the subjects suffering a heart attack or stroke by about 30 percent. Put another way, 3 cardiovascular events were prevented by the diet per thousand patient years. You could put it yet another way, though the authors chose not to do so: 100 people would have to have stuck to the diet for 10 years for three of them to avoid a stroke or a heart attack. This result was statistically significant, which is to say that it was unlikely to have come about by chance alone, but was it significant in any other way?
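The conversion between these ways of stating the result is simple enough to sketch. The rate of 3 events prevented per thousand patient years is the figure quoted above; the function name and the derived figure of patient years per event are illustrative additions, not numbers reported by the authors.

```python
PREVENTED_PER_1000_PATIENT_YEARS = 3  # figure quoted in the text


def events_prevented(people, years, rate_per_1000=PREVENTED_PER_1000_PATIENT_YEARS):
    """Expected cardiovascular events avoided for a group kept on the diet."""
    patient_years = people * years
    return patient_years * rate_per_1000 / 1000


# 100 people for 10 years is 1,000 patient years.
prevented_in_example = events_prevented(100, 10)

# Patient years of dieting needed, on average, to avoid one event.
patient_years_per_event = 1000 / PREVENTED_PER_1000_PATIENT_YEARS

print(prevented_in_example, round(patient_years_per_event))
```

On these figures, roughly 333 patient years of adherence are needed for each event avoided, which is the same result as the 30 percent reduction, seen from the patient's side of the table.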

Such significance is in the eye of the beholder, or rather in the opinion of the patient. There are reasons for liking the Mediterranean diet other than health reasons, namely aesthetic ones; and this is important, because if it were discovered that a diet of raw cabbage and boiled fish without salt produced the same result, would anyone other than a masochist contemplate adhering to it? After all, only hypochondriacs turn their meals into medicines and nothing else.

Even if the study had found much more dramatic results than it did find, it would have been severely limited in its application. It does not follow from the fact that the diet prevents stroke or heart attack in people at relatively high risk of having one in the first place that it would prevent either or both of them in people at much lower risk.

On the other hand, it is also possible that the Mediterranean diet would have prevented the development of the high risk in the first place if taken early enough in life. It is true that Mediterraneans, on the whole, have fewer heart attacks and strokes than people of more northerly climes; what is not so clear is how far Mediterraneans actually consume a Mediterranean diet. The idea that diet is the sovereign way to health is a very old one, but given the fact that nations of the most diverse dietary habits are among those with the highest life expectancy, it might not be correct.

In any case, the Spanish research involved the monitoring and one might almost say the badgering of patients to get them to comply with the diet. What is found in the context of a trial is not necessarily transferable to “real” life.

8. Is drug addiction really just like any other illness?

Who can imagine an organization called Arthritics Anonymous whose members stand up and say “My name is Bill, and I’m an arthritic”?

Sometimes a single phrase is enough to expose a tissue of lies, and such a phrase was used in a recent editorial in The Lancet titled “The lethal burden of drug overdose.” It praised the Obama administration’s drug policy for recognizing “the futility of a punitive approach, addressing drug addiction, instead, as any other chronic illness.” The canary in the coal mine here is “any other chronic illness.”

The punitive approach may or may not be futile. It certainly works in Singapore, if by working we mean a consequent low rate of drug use; but Singapore is a small city state with very few points of entry that can hardly be a model for larger polities. It also seems to work in Sweden, which had the most punitive approach in Europe and the lowest drug use; but the latter may also be for reasons other than the punishment of drug takers. In most countries (unlike Sweden) consumption is not illegal, only possession. That is why there were often a number of patients in my hospital who had swallowed large quantities of heroin or cocaine when arrest by the police seemed imminent or inevitable. Once the drug was safely in their bodies (that is to say, safely in the legal, not the medical, sense), they could not be accused of any drug offense. Therefore, the “punitive approach” has not been tried with determination or consistency in the vast majority of countries; like Christianity according to G. K. Chesterton, it has not been tried and found wanting, it has been found difficult and left untried.

But the tissue of lies is implicit in the phrase “as any other chronic illness.” Addiction is not a chronic illness in the sense that, say, rheumatoid arthritis is a chronic illness. If it were, Mao Tse-Tung’s policy of threatening to shoot addicts who did not give up drugs would not have worked; but it did. Nor would thousands of American servicemen returning from Vietnam where they had addicted themselves to heroin simply have stopped when they returned home; but they did. Nor can one easily imagine an organization called Arthritics Anonymous whose members attend weekly meetings and stand up and say, “My name is Bill, and I’m an arthritic.”

Some people (but not presumably The Lancet) might say that it hardly matters what you call addiction. But calling it an illness means that it either is or should be susceptible of medical treatment. And one of the most commonly used medical “treatments” of heroin addiction is a substitute drug called methadone. According to The Lancet, though, 414 people died of methadone overdose in Great Britain in 2012, while 579 died of heroin and morphine overdose. Since fewer than 40 percent of heroin and morphine addicts are “treated” with methadone, treatment probably results in more deaths than it prevents, at least from overdose. Moreover, some of the people it kills are the children of addicts.
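The comparison of overdose rates implied here can be made explicit, though only under a strong simplifying assumption that is not in The Lancet's figures: that every methadone death falls on a treated addict and every heroin or morphine death on an untreated one. The sketch below compares deaths per treated addict with deaths per untreated addict; it says nothing about deaths prevented, and the function name is illustrative.

```python
# Deaths in Great Britain in 2012, as quoted from The Lancet.
METHADONE_DEATHS = 414
HEROIN_MORPHINE_DEATHS = 579


def relative_overdose_rate(treated_share):
    """Methadone deaths per treated addict divided by heroin/morphine
    deaths per untreated addict, for a given treated share of addicts.

    Assumption (not from the source): methadone deaths occur only among
    the treated, heroin and morphine deaths only among the untreated.
    """
    if not 0 < treated_share < 1:
        raise ValueError("treated_share must lie strictly between 0 and 1")
    per_treated = METHADONE_DEATHS / treated_share
    per_untreated = HEROIN_MORPHINE_DEATHS / (1 - treated_share)
    return per_treated / per_untreated


ratio_at_40 = relative_overdose_rate(0.40)  # the upper bound given in the text
ratio_at_30 = relative_overdose_rate(0.30)  # a hypothetical lower share

print(round(ratio_at_40, 2), round(ratio_at_30, 2))
```

At a treated share of exactly 40 percent the two rates are close, with the methadone rate slightly the higher; the smaller the share actually treated, the more unfavourable the comparison becomes for methadone.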

The United States, with five times the population of Great Britain, has nearly fifteen times the number of drug-related deaths (38,329 in 2010). This, however, is not because illicit drug use is much greater than in Britain. It is because doctors in America are prescribing dangerous opioid drugs in huge quantities to large numbers of patients who mostly do not benefit from them. More people now die in the United States of overdoses of opioid drugs obtained legally than are murdered. It is not true, then, that all the harm of opioid misuse arises from its illegality.

Recently I asked a group of otherwise well-informed Americans whether they had heard of the opioid scandal. They had not.

9. Are obese children victims of child abuse?

Nothing illustrates better than child abuse the tendency of ill-defined but nevertheless meaningful concepts to spread beyond their original signification to include more and more phenomena.

Recently I was asked by BBC radio to discuss (in three minutes flat) the question of whether morbidly obese children were the victims of abuse by their parents. By coincidence, the Lancet of that week published an editorial on the psychological abuse of children and what doctors could or should do about it.

Nothing illustrates better than child abuse the tendency of ill-defined but nevertheless meaningful concepts to spread beyond their original signification to include more and more phenomena. The evolutionist Richard Dawkins has even suggested that to bring up children in any particular religious faith is a form of child abuse, since the child’s subsequent freedom to choose his beliefs according to the evidence is thereby impaired: in which case the history of all previously existing societies is not that of class struggles, as the Communist Manifesto has it, but of child abuse.

The editorial in the Lancet referred to a report on the psychological maltreatment of children by the American Academy of Pediatrics, the general drift of which was that such maltreatment is protean in nature and has bad effects upon children later in life, from mental illness and criminality to inability to form close relationships and low self-esteem. Maltreatment is dimensional rather than categorical: at what point does “detachment and uninvolvement” give way to “undermining psychological autonomy”?

Likewise “restricting social interactions in the community” can be a form of maltreatment, but so can failure to do so, in so far as association with undesirables might “encourage antisocial or developmentally inappropriate behavior.” Parenthood is thus a constant navigation between various Scyllas and Charybdises, and is rarely entirely successful. Surveys show that about one in sixteen people in Britain and America consider themselves to have been the subject of psychological abuse in childhood. I count myself as among the abused.

The report considers what can be done to reduce the psychological abuse of children, both on a population and an individual level. It is rather coy about what kind of families are most likely to maltreat their children psychologically, perhaps because this would produce yet one more stick with which to beat the poor; and as for the “treatment” of individuals, the report supposes that most psychological maltreatment is the result of error or misunderstanding rather than of malice and depravity. Thus, it can be righted by a little training and exhortation. Doctors are uncomfortable when confronted by the intractability of human malice, and perhaps it is as well that they should be.

I read something in the report that took me back to my student days:

Severe forms of psychological deprivation can be associated with psychosocial short stature, a condition of short stature or growth failure formerly known as psychosocial dwarfism.

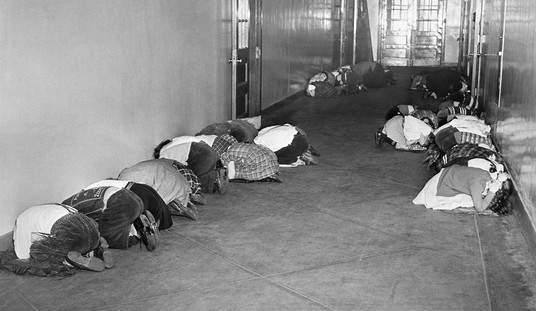

Back in the early ’70s, we were taught that the failure of abused children to grow normally through what was then called “maternal neglect” was caused by an insufficiency of food. A horrific experiment had been performed in which maternally deprived children were taken from their mothers and divided into three groups: those given food and affection, those given food but no affection, and those given affection but not enough food. The children in the first two groups grew in an accelerated fashion and by the same amount; those in the third did not. The horrible conclusion was that affection was not necessary to growth.

I am very glad if this is not so. I note, however, the report’s weasel words “associated with” rather than the unequivocal “caused by.” And speaking for myself – and only for myself – I think the psychological maltreatment that I suffered as a child did me some good as well as harm. It put a certain iron in the soul as well as giving me certain defects of character.

10. Should you vaccinate your kids?

Since rotavirus immunization of infants was introduced in the United States, hospital visits and admissions have declined by four fifths among the immunized.

There is no subject that provokes conspiracy theories quite like the immunization of children. That innocent, healthy creatures should have alien substances forcibly introduced into their bodies seems unnatural and almost cruel. As one internet blogger put it:

Don’t take your baby to get a shot, how do you know if they tell the truth when giving the baby the shot, I wouldn’t know because all vaccines are clear and who knows what crap is in that needle.

The most common conspiracy theory at the moment is that children are being poisoned with vaccines to boost the profits of the pharmaceutical companies that make the vaccines. No doubt such companies sometimes get up to no good, as do all organizations staffed by human beings, but that is not to assert that they never get up to any good.

A relatively new vaccine is that against rotavirus, the virus that is the largest single cause of diarrhea in children. In poor countries this is a cause of death; in richer countries it is a leading cause of visits to the hospital but the cause of relatively few deaths.

Since rotavirus immunization of infants was introduced in the United States, hospital visits and admissions have declined by four fifths among the immunized. However, evidence of benefit is not the same as evidence of harmlessness, and one has the distinct impression that opponents of immunization on general, quasi-philosophical grounds, almost hope that proof of harmfulness will emerge.

A study published in a recent edition of the New England Journal of Medicine examined the question of one possible harmful side-effect of immunization against rotavirus, namely intestinal intussusception, a condition in which a part of the intestine telescopes into an adjacent part, and which can lead to fatal bowel necrosis if untreated.

The authors compared the rate of intussusception among infants immunized with two types of vaccine between 2008 and 2013 with that among infants from 2001 to 2005, before the vaccine was used. There is always the possibility that rates of intussusception might have changed spontaneously, with or without the vaccine, but the authors think that this possibility is slight: certainly there is no reason to think that the rates did change.

They found that there were 6 cases of intussusception after 207,955 doses of one kind of vaccine, whereas only 0.72 cases would have been expected from the 2001 to 2005 figures. Therefore the risk of intussusception increased 8.4 times after immunization.

The authors found no such increase after the use of another type of vaccine, with 8 cases after 1,301,801 doses administered instead of the expected 7.11. This difference was too small to be statistically significant; it might easily have arisen by chance. However, another study of this vaccine, from Australia rather than the United States, suggested that the size of the risk with this vaccine was similar to that of the other.
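The arithmetic behind these comparisons can be sketched in a few lines of Python. This is an illustration only, not the study's method: it assumes that case counts follow a Poisson distribution, and the names `poisson_tail`, `vaccines` and `results` are invented for the example. The crude ratio of 6 observed to 0.72 expected comes to about 8.3; the 8.4 quoted above presumably rests on the study's own unrounded or adjusted figures, which are not given here.

```python
import math


def poisson_tail(observed: int, expected: float) -> float:
    """Probability of seeing at least `observed` cases by chance,
    assuming counts follow a Poisson distribution with mean `expected`."""
    below = sum(
        math.exp(-expected) * expected**i / math.factorial(i)
        for i in range(observed)
    )
    return 1.0 - below


# Figures quoted in the text: (observed cases, expected cases, doses given).
vaccines = {
    "first vaccine": (6, 0.72, 207_955),
    "second vaccine": (8, 7.11, 1_301_801),
}

results = {}
for name, (observed, expected, doses) in vaccines.items():
    ratio = observed / expected            # crude observed-to-expected ratio
    per_100k = observed / doses * 100_000  # observed cases per 100,000 doses
    p = poisson_tail(observed, expected)   # chance of a count this high or higher
    results[name] = (ratio, per_100k, p)
    print(
        f"{name}: ratio {ratio:.2f}, "
        f"{per_100k:.2f} cases per 100,000 doses, "
        f"chance probability {p:.4f}"
    )
```

On this simple model, six cases where 0.72 were expected would arise by chance far less often than one time in a thousand, whereas eight cases where 7.11 were expected is an entirely unremarkable result. Even for the first vaccine, the observed cases amount to roughly 3 per 100,000 doses.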

Therefore, on the balance of probability, immunization against rotavirus does cause intussusception in infants and is therefore not entirely harmless. To that extent the conspiracy theorists are correct. But good in medicine seldom comes without the possibility of harm (the reverse, alas, is not true), and if doctors never prescribed anything that might do harm as well as good they would not prescribe anything at all. The good must always be weighed against the harm and in this case the balance seems overwhelmingly on the side of the good. The very fact that such huge numbers of infants have to be immunized to reveal any harm at all is an indication that, in numerical terms at least, the harm cannot be very great.
