Recent studies have reported a worrisome decline in IQ scores in Western nations over recent decades, a reversal of the once-hopeful Flynn Effect (named after the late philosopher and psychologist James R. Flynn), which posited a growth in cognitive abilities for much of the 20th century. That reversal has led to predictions of a general dumbing down of select populations. Other studies report that IQ erosion is not confined to this century and that IQ has dropped by an average of 14.1 points over the last century. As Evan Horowitz writes for NBC News, “A range of studies using a variety of well-established IQ tests and metrics have found declining scores across Scandinavia, Britain, Germany, France and Australia.”

Horowitz argues that the plummet in cognitive abilities “could not only mean 15 more seasons of the Kardashians, but also… fewer scientific breakthroughs, stagnant economies and a general dimming of our collective future.” Flynn himself, who did the original research on the eponymous effect, has stated that “The IQ gains of the 20th century have faltered.” Flynn’s more optimistic Are We Getting Smarter? Rising IQ in the Twenty-First Century was published in 2012; his subsequent findings pointed in the opposite direction.*

The brainchild of French psychologist Alfred Binet, the IQ construct is controversial, with many different interpretations and applications. Charles Spearman proposed the notion of a g factor, or general intelligence measure, responsible for overall performance on various mental ability tests, such as those of memory retention, spatial processing, and quantitative reasoning. The g factor has been compared to general athletic ability, which allows a person to excel in different fields and activities. There has been vigorous debate over the strict equivalence between IQ scores and intelligence, but there is broad agreement on a general waning of intelligence or, from a clinical perspective, an ebbing of IQ scores. Of course, smart people can sometimes do poorly on IQ tests, and obtuse people can sometimes score high on particular sections of these tests. But the consensus appears to be that the correlation approximately holds while allowing for scalene anomalies. In effect, the g factor is eroding.

One recalls MIT economist Jonathan Gruber, the architect of Obamacare, who referred to “the stupidity of the American voter” as helping him to pass the controversial law. One wonders if Gruber ever heard of Swiss psychologist Jean Piaget’s test results purporting to show that “the rot starts at the top.” This would implicate Gruber and his cohort in the experience of what Piaget calls horizontal décalage, which stymies the application of cognitive functions and logical operations to extended tasks. In other words, Gruber et al. are also stupid, gradually destroying the very society that enabled them to flourish. But the rot can also start at the bottom, as a combination of generalized mental vacancy and low-to-no-information voters furthers cultural and social degeneration. As Morris Berman remarks in The Twilight of American Culture, “A society cannot function if nearly everyone in it is stupid.”

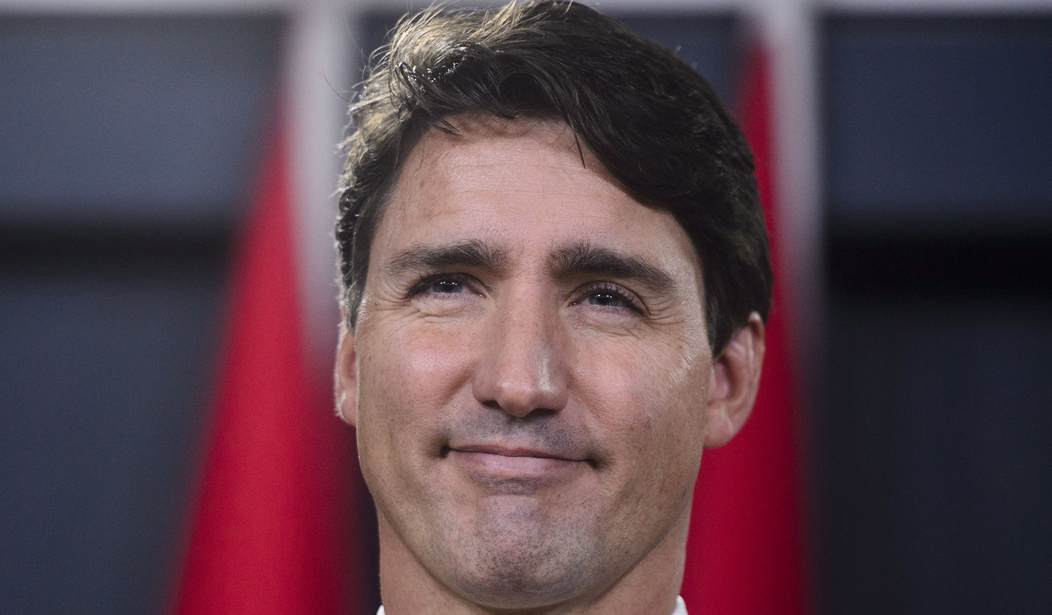

Why should we be surprised that an American president should pronounce “corpsman” as “corpseman”? Or that a Canadian prime minister says “peoplekind” in lieu of “mankind”? Or that a Washington, D.C., mayor and his staff should have objected to a perfectly good word like “niggardly”? Or that a Methodist pastor and congressman should follow the exclamation “Amen” with “a-woman,” when an ordained minister should surely know that “Amen” is an acronym for the Hebrew אֵל מֶלֶךְ נֶאֱמָן (El melech neeman: “Lord and faithful King”) or, as some scholars think, a calque for the Aramaic “so be it”? One can multiply these gaffes, misnomers, and malapropisms indefinitely among those who should know better, and that is merely scratching the surface. The dumbing-down phenomenon is virtually encyclopedic in heft and extent.

One sees the same intelligence deficit in the names chosen for some of our major social media networks. “YouTube” is cringe-worthy; just say it dispassionately to yourself. “Facebook” is a ridiculous moniker, as well as a dubious platform: as Niall Ferguson quips in The Great Degeneration, Facebook is “a vast tool enabling like-minded people to exchange like-minded opinions about, well, what they like.” Then there’s “Twitter.” A conversation between human beings is compared to birds twittering on a digital branch, and the implicit message is that communicants are bird-brained. (Contrast such infelicity with a beleaguered platform like “Parler,” with its French connotation of real speech and its analogy to a living room where people gather to converse amicably and share ideas.) The Apple logo, an apple with a bite taken out of it, is the fruit of pure bathos and corporate stupidity, inadvertently reminding us of the primal sin in the Garden of Eden and warning us about the perilous quest for knowledge that tantalizes on another digital tree. “Think different” is thus contraindicated, an original sin. Apple, seriously?

Top or bottom doesn’t seem to matter. In his spy thriller Early Warning, Michael Walsh comments about government officials who, presumably “the best minds of the Republic,” are merely a “collection of hacks, time-servers, and affirmative-action appointees” whose advancement depends heavily on nepotism. “It was really pathetic, when you thought about it: that more than two centuries of American history had come to this.” Believe it. An American congressman fears the island of Guam will capsize. So-called “ambush journalist” Jesse Watters, in Watters’ World, interviews young university students; the level of ignorance, functional illiteracy, and smug self-esteem he uncovers is enough to turn our cultural practices, general knowledge, and university system into a cosmic joke.

And so it goes. A London community activist, asked about removing a Churchill statue during the summer of BLM love, admits she hasn’t “personally met” Winston Churchill. A swarm of Twitter users condemns Tampa Bay Buccaneers QB Tom Brady as “racist” for defeating half-black Kansas City Chiefs QB Patrick Mahomes in Super Bowl LV during Black History Month; the fact that the great majority of Brady’s teammates are black and are clearly Brady enthusiasts seems to have escaped their attention. Major economic and energy policies seem planned not by cerebral giants but by weed-addled pubescents. Bill Gates, for example, wants to pepper the sky with aerosols to reflect sunlight out of the atmosphere and initiate global cooling; the risks are incommensurable and likely irreversible. Gates has what we might call “sector-intelligence” and might do well on segments of the Stanford-Binet IQ test, but I wouldn’t bet on his g score. The travesty of intelligence, prudence, and wisdom is beyond calculation, and it is only getting worse as IQ continues to slip down the great chain of thinking. This is the world that the classic film Idiocracy extravagantly punctures.

Why this should be is anybody’s guess. No one really knows. Various theories have been proposed to account for accelerating neural descent, ranging from the Dewey-inspired “progressive education” agenda working its leveling passage from the turn of the 20th century to the decrepit public schools and failed universities of the present day; to the softening effect of prolonged affluence and ease on a culture; to the debilitating influence of “smart” technology that performs our cognitive functions for us; to the assumption that women of higher intelligence are having fewer children, implying that women of lower intelligence are driving population growth; to the effects of increased media exposure and the consequent lessening of reading; to the emergence of the vices of envy and resentment owing to radical egalitarianism and the rancor of the under-performing against the skilled, hard-working, and successful, a dynamic cogently analyzed by Dinesh D’Souza in Stealing America; or to the merely inescapable fact of decay: as Robert Frost wrote, “Nothing gold can stay.” One thinks, too, of poet Gerard Manley Hopkins’ remark in his Journal: “From much, much more; from little, not much; and from nothing, nothing.” Whatever the cause or causes may be, intellectual deterioration seems to be the case.

What, then, is to be done? We need to go to literature to contemplate possibilities for restoration. The problem, says Barry Lopez in Arctic Dreams, is that “The good minds still do not find each other often enough.” In his reflections on culture In Bluebeard’s Castle, George Steiner imagined a future of small, eremitic clusters of intellectual light dotting an arid landscape, recycling Max Weber’s notion of frail enclaves of enlightenment as the last resort of a civilization sinking into darkness. Walter M. Miller Jr.’s classic A Canticle for Leibowitz portrayed an obscure abbey in the Utah desert where historical knowledge is kept alive and preserved from the “Simpletons,” even if it’s only a sacred shopping list or a mysterious blueprint for circuit design. “Let us change the icons,” wrote Will Durant in The Greatest Minds and Ideas of All Time, “and light the candles.”

Berman calls this the “monastic option,” but he does not regard it as an assembly of cenobites residing in a physical plant somewhere in the outback. Instead, it consists of a disparate collection of individuals, “cultural nomads,” who may not know one another but are dedicated to a life of private decency, “the disinterested pursuit of truth, the cultivation of art [and] the commitment to critical thinking.” The “new monks” derive from and support “traditions of craftsmanship, care, and integrity, preservation of canons of scholarship, critical thinking, individual achievements and independent thought.” Their purpose is “to transmit a memory trace of what a culture can be about.”

It’s a daunting task. The number of people incapable of lucid argument and civil debate, whether Internet trolls, social media vulgarians, angry progressivists, media ignoramuses, or intellectually challenged political leaders, is legion. It is therefore by no means astonishing that the greatest civilization the world has ever known, the Judeo-Christian West, is subsiding into a state of cognitive expiry, prone to fantasies and delusions, unable to confront and parse the reality of the world, oblivious to the symbiosis of man, history and nature, distracted by pseudo-scientific baubles, bereft of spiritual substance, and foreign to the very idea of truth.

In Social and Cultural Dynamics, Pitirim Sorokin, one of the great thinkers of our time, distinguished between “ideational” cultures, which are focused on knowledge and the spirit, and “sensate” cultures, which are primarily informational and materialistic. The latter eventually devolve into a condition in which coercion, fraud, debasement of the creative impulse, family breakdown, and the encroachment of “untruth” into the human conscience (read: political correctness, fake news, electoral debauchery) are paramount. A sensate culture is our present cultural home: it lacks reflective capacity and is experiencing a downtrend in clarity of thought and general percipience, shaving off IQ points as both decline. The concept of intelligence is complex and multifactorial, but if by “intelligence” we mean something like the ability to see the world as it is, to understand context, and to act in ways proven to be beneficial over time, then, according to Sorokin, intelligence is likely to decline in the latter stages of a “sensate” age.

The decline of intelligence, with moral rectitude and creative exuberance as collateral casualties, is now at full throttle. The exceptions to the debacle (monks, nomads, people of integrity, people capable of common sense, the classically educated) represent the only viable hope for a new “ideational” age to arise out of the rubble of a “sensate” disaster. It may take another century to bring about what Sorokin called “the turn,” the slow ascent up the IQ ladder, which is cold comfort indeed. But I suspect it’s the only real comfort we have.

————————-

* The obituary in The New York Times tells only the sunnier version of the story; it omits the more alarming results of Flynn’s later research. As is to be expected, the Gray Lady has grown increasingly wizened as the sensate age proceeds.