Never before has a presidential election seen a greater contrast in the attitudes of the major candidates towards climate change.

Hillary Clinton told delegates at July’s Democratic National Convention that man-made climate change is “an urgent threat and a defining challenge of our time.” Yet Donald Trump calls Clinton’s approach an “extreme, reckless anti-energy agenda,” and he told Fox News on July 26 that man-made climate change “could have a minor impact, but nothing, nothing [comparable] to what they’re talking about.”

To determine which position is more reasonable, and what climate change mitigation policy, if any, is needed, we need a way of properly assessing the overall risk of man-made climate change. It is not enough to simply say that the consequences of catastrophic climate change would be so dire that any and all actions to avert it are justified. We need to actually take into account the probability of such events occurring in the foreseeable future.

We conduct risk assessment in everyday life, of course. Yet for some reason, we don’t conduct it on this issue.

When we go for a walk, we risk being hit by a truck, a falling tree, or lightning, events that would obviously be personally catastrophic if they actually came about. But we judge that — if normal safety precautions are taken — the likelihood of these things happening is so low that we have no qualms about leaving home.

The same approach should obviously apply to public policy formulation. If risk assessment only involved responding to possible outcomes, then the nations of the world would be building an asteroid defense system. After all, a large asteroid impact could destroy all life on Earth, perhaps even shatter the planet itself, a far worse scenario than even the most extreme global warming forecasts.

But scientists judge that the likelihood of a significant impact in the foreseeable future is far too small to justify spending trillions of dollars on the issue.

So, while we do watch the skies for possible cosmic threats, we dedicate the majority of our security dollars to dealing with known, more immediate problems such as crime, terrorism, and local pollution.

Dealing with the possibility of catastrophic human-caused climate change should result in an analysis similar to that of a possible asteroid strike. Here’s why: The portion of climate change that is due to human activity must have been very small to date.

The United Nations Intergovernmental Panel on Climate Change estimated in its latest Assessment Report that surface temperature, averaged over all land and ocean surfaces, increased only 1.53 degrees Fahrenheit between 1880 and 2012.

Further, a significant portion of that rise must have been part of a natural climate cycle, as the Earth had been exiting the Little Ice Age.

So, man’s contribution to the change that has occurred over those 132 years is something less than 1.53 degrees Fahrenheit — anything but catastrophic.

But hold on: stating a 132-year-long change in terms of hundredths of a degree makes no sense at all. Why? Over most of those 132 years, the instruments we used to measure temperature could only measure to an accuracy of one entire degree.

Did you hear governments and media claim recently that 2014 and 2015 were “the hottest years on record”?

Well, those reports claimed that 2014 broke the record by seven hundredths of a degree Fahrenheit, and that 2015 broke the record by 29 hundredths of a degree Fahrenheit.

Changes in sea level and in the incidence and severity of extreme weather have also been unremarkable over the past century. Therefore, as with temperature rise, the contribution to these changes from human activity must have been very small indeed.

Man-made climate change concerns are based solely on possible future events, events that are, by definition, not yet known. University of Western Ontario applied mathematician Dr. Chris Essex, an expert in the mathematical models that are the basis of climate change concerns, explains:

“Climate is one of the most challenging open problems in modern science. Some knowledgeable scientists believe that the climate problem can never be solved.”

Wait — why have we never heard that before?

It turns out we should have, as even the United Nations Intergovernmental Panel on Climate Change (IPCC) included it in its Third Assessment Report:

“The climate system is a coupled non-linear chaotic system, and therefore the long-term prediction of future climate states is not possible.”

So how does one develop rational climate change policy when the probability of catastrophic outcomes is unknown, and perhaps even unknowable?

In his 2004 Ph.D. thesis, “Astronomical Odds – A Policy Framework for the Cosmic Impact Hazard,” Geoffrey S. Sommer laid out a rational hierarchy of responses to the possibility of a catastrophic asteroid or comet impact with our planet, something he considered “an extreme example of a low-probability, high-consequence policy problem.”

He identified three levels:

Level 1: Survey — Surveillance and tracking

Level 2: Characterization — determining the characteristics of the object found in level 1

Level 3: Mitigation — interception (asteroid defense systems, deflection, etc.), civil defense and consequence management after impact (a form of adaptation)

Sommer’s approach is similar to that often found in military operations. Surveillance is used to detect a threat; the detected threat is then tracked and, to the extent possible, characterized; mitigation actions are, if deemed necessary, taken to neutralize the threat. Surveillance and tracking provide support for the mitigation function.

Sommer’s hierarchy of responses should also be used in the formulation of public policy concerning human-caused climate change.

The three levels would be applied as follows:

Level 1: Surveillance and tracking – Expand and greatly improve weather and climate sensing systems, on the ground and from orbit. Continue to determine past climate change through geologic studies of proxies of climate in Earth’s history.

Level 2: Characterization – Research to determine if current changes are dangerous, and, if so, if they are caused significantly by human activities. Continue to try to better understand the climate system so that meaningful climate models may someday be created to aid in preparation for future change.

Level 3: IF possible, practical, AND DEMONSTRATED IN LEVELS 1 AND 2 TO BE NECESSARY, mitigation.

Former University of Winnipeg professor and historical climatologist Dr. Tim Ball shows that the collection and interpretation of data required to fulfill levels 1 and 2, such measurements as temperature and precipitation, has only just begun.

Ball explains that there are relatively few weather stations with records of adequate length or reliability on which to base model forecasts of future climate; therefore, the current predictions of climate alarmists have no validity.

The late Hubert Lamb, founder of the Climatic Research Unit at the University of East Anglia in the United Kingdom, defined the basic problem in his autobiography, Through all the Changing Scenes of Life: A Meteorologist’s Tale:

“[I]t was clear that the first and greatest need was to establish the facts of the past record of the natural climate in times before any side effects of human activities could well be important.”

NASA’s Goddard Institute for Space Studies (GISS) implies that today’s ground-based weather station coverage is adequate. They display weather stations in the following map, where each dot represents a single station:

Weather stations according to NASA GISS. Vast surfaces are unmeasured.

Ball replies that the problem is that each dot represents one thermometer reading for a few hundred square kilometers. Further, vast areas of the Earth have no weather stations at all.

For example, there is no data for an area of the Arctic Ocean almost the size of Russia.

Most of the Antarctic and massive areas of the oceans also have no coverage.

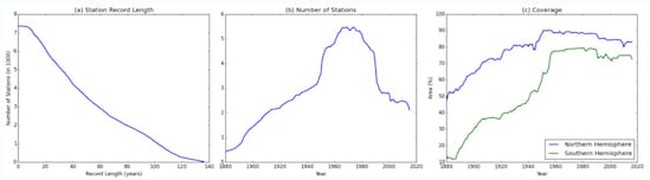

GISS — apparently unknowingly — illustrates that problem in its own graphs, as follows:

NASA GISS weather station coverage

As can be seen in the first graph, only about 1000 weather stations have records of 100 years or more.

Further, almost all of them are in heavily populated areas of the northeastern U.S. or Western Europe, and are subject to the “urban heat island” effect.

The second graph above shows that, after 1970, many weather stations were closed, supposedly because satellite-based measurements would soon take over. However, satellite-based measurements did not really begin until 2003-04.

The third graph above looks promising at first glance. It claims that 80% of the area of the Earth was “covered” by a weather station in the northern hemisphere, and only slightly less in the south.

However, GISS considers a region adequately “covered” if there is a weather reporting station within 1200 km.

Look at the map below. According to this standard, if a weather station recorded data in Los Angeles, there would be no need for another station all the way to El Paso, Texas, and even into parts of Wyoming.

You tell me:

Does Wyoming’s winter feel like Los Angeles?

Ball responds:

“[The 1200 km standard] is absurd. Yet the weather station record is still the standard for IPCC reports.”

NASA GISS considers a region ‘covered’ if there is a weather reporting station within 1200 km.
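The 1200 km criterion described above can be checked with a simple great-circle distance calculation. The following is only a rough sketch, not GISS's actual procedure: it assumes a spherical Earth with a mean radius of 6371 km, uses approximate city-center coordinates for Los Angeles and El Paso, and the function names `great_circle_km` and `is_covered` are hypothetical, introduced here purely for illustration.

```python
import math

EARTH_RADIUS_KM = 6371.0     # assumption: mean radius of a spherical Earth
COVERAGE_RADIUS_KM = 1200.0  # the coverage criterion quoted in the text


def great_circle_km(lat1, lon1, lat2, lon2):
    """Haversine distance in km between two points given in decimal degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def is_covered(station, point, radius_km=COVERAGE_RADIUS_KM):
    """True if `point` lies within `radius_km` of `station` (both are (lat, lon) pairs)."""
    return great_circle_km(*station, *point) <= radius_km


# Approximate city-center coordinates (assumed values, decimal degrees).
LOS_ANGELES = (34.05, -118.24)
EL_PASO = (31.76, -106.49)

if __name__ == "__main__":
    distance = great_circle_km(*LOS_ANGELES, *EL_PASO)
    print(f"Los Angeles to El Paso: about {distance:.0f} km")
    print("Within the 1200 km radius:", is_covered(LOS_ANGELES, EL_PASO))
```

Under these assumptions, El Paso falls just inside the 1200 km radius of a Los Angeles station, which is the situation the Los Angeles example describes.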

This is only the beginning of our data collection problems. Ball explains:

“The atmosphere is, of course, three-dimensional, so cubes, not surface areas, are the mathematical building blocks of the computer models on which the climate scare is based. Yet the amount of data above the surface is almost nonexistent.”

Ball estimates that, between the surface and the atmosphere, there is data for only about 10% of the total atmosphere.

He concludes:

“[A]ny discussion about the complexities of climate models including methods, processes and procedures are irrelevant. They cannot work because the simple truth is the data, the basic building blocks of the model, are completely inadequate.”

Yet, it is the model output that is being used as the foundation for trillion-dollar global energy policy decisions.

If, someday, scientists had the data to fulfill the requirements of Level 1, surveillance and tracking, we would still have to complete Level 2, characterization. We would need to demonstrate that the data we had collected revealed dangerous man-made climate change before moving on to Level 3, mitigation.

We are a very long way from being able to properly characterize the threat of man-made climate change.

However, Ball explains that there is nothing in the data collected so far that even indicates a possible problem:

“The climate change we have seen in the past century is no different from what we would expect to see due to a period of natural warming out of the Little Ice Age from 1350 to the late 1800s. Whatever human contribution there may be is lost in the noise of natural variability.”

The UN’s ongoing My World global survey indicates that most of the world’s people agree with Trump, not Clinton.

The 9.7 million people polled so far have told the UN that, in comparison with access to reliable energy, better healthcare, government honesty, a good education, and other concerns, they don’t care about climate change.

“Action taken on climate change” rates dead last out of the 16 suggested priorities for the UN.

GOP candidates should follow Trump’s lead, making good use of reports like those of the Nongovernmental International Panel on Climate Change to clearly explain to the public that climate change is a natural phenomenon, that human influence is likely very small, and that there is no demonstrable basis for spending one billion dollars worldwide every day on climate change mitigation.

Until enough basic climate data has been collected and dangerous human-caused climate change has actually been demonstrated, all funding for mitigation should be cancelled. The world has real issues to focus on.
